Cyber risk has become an exercise in interpretation rather than reduction. The industry has over-optimised for modelling, scoring, and explaining exposure, often driven by consulting-led approaches that rely heavily on subjectivity and narrative. This piece argues that the real problem is upstream: data acquisition, normalisation, and comparability. Cyber Tzar was built to industrialise that problem, collapsing the time between discovery and action, and shifting organisations away from “bean counting” risk towards actually reducing it. The distinction is simple: attackers exploit exposure, not models.

Contents

- 1. Introduction

- 2. The Industry Didn’t Fail Because It Lacked Frameworks

- 3. The Consultant Paradox

- 4. Subjectivity Dressed Up as Insight

- 5. The Real Problem Is Upstream

- 6. Third-Party Risk: Where This Breaks Completely

- 7. Bean Counting vs Doing Something

- 8. Why Cyber Tzar Exists

- 9. Yes, Context Matters. But Not First

- 10. The Speed Advantage

- 11. The Uncomfortable Consequence

- 12. SMEs Were Never Meant to Win This Game

- 13. Subjectivity vs Empirical Comparison

- 14. Conclusion: No Cyber Idea

- 15. Epilogue: Final Thought

- 16. References

1. Introduction

This is the more personal version of an argument first made in the Cyber Tzar article “Cyber Risk Isn’t a Spreadsheet Exercise: Why Finding and Fixing Exposure Matters More Than Arguing Its Price”. That piece sets out the company’s view of where the industry is going wrong and the practical approach to solving it.

I did not build Cyber Tzar because cyber lacked frameworks or intelligence. It has both in abundance. What it lacks is efficiency in getting from uncertainty to action. Too much of the industry is built around interpreting risk rather than exposing it, shaped by consulting models that reward narrative, subjectivity, and prolonged engagement.

I have seen this from the inside. Large teams, long timelines, repeated assessments, and a lot of effort spent turning the same underlying data into slightly different stories. Useful, sometimes. Necessary, occasionally. But rarely fast enough and often not directly tied to reducing real-world exposure.

Cyber Tzar comes from a different premise. If you solve the data problem properly, most of the argument disappears. What remains is straightforward: what is exposed, how it compares, and what needs to be fixed. Everything else is secondary.

2. The Industry Didn’t Fail Because It Lacked Frameworks

Cyber is not short of intelligence. It is not short of frameworks, models, scoring systems, quantification methods, or advisory capability. The industry has effectively built three overlapping layers: technical scoring, financial quantification, and benchmarking. Each serves a purpose. Scoring prioritises vulnerabilities. Quantification translates risk into financial terms. Benchmarking provides relative context. The problem is not that these exist. The problem is that organisations are often forced to navigate all three before taking action.

If anything, the industry is saturated with frameworks and models: FAIR, CVSS, CVaR, ratings platforms, benchmarking systems, and maturity models. You can model cyber risk six different ways before lunch and still have time to argue about which one is most “aligned to the business”. Abundance is not the problem. The problem is that somewhere along the way, the industry decided that describing risk well was the same thing as managing it. It isn’t.

3. The Consultant Paradox

I have worked with some very capable people in consulting. People who built entire technology practices inside what were once audit firms. People who have run major parts of government. People who have operated at levels where decisions actually matter. The good ones all know the same thing. There is a structural problem in consulting. Not because consultants are stupid. Quite the opposite. Many are exceptionally bright. But the model produces the wrong output. You are not primarily paying for a reduction in risk. You are paying for interpretation, narrative, structure, and presentation. Which is why all the old jokes exist and persist. You can dress it up however you like, but if the primary output of your cyber programme is a deck, then you have bought a narrative, not a capability.

4. Subjectivity Dressed Up as Insight

One of the more subtle problems is subjectivity. Two consulting firms can look at the same environment and give you two different answers. Not wildly different, but different enough to justify their methodology, their approach, their experience. That is not an accident. It is a feature. Because subjectivity is where the margin lives.

Many quantification models introduce subjectivity at the point where they attempt to translate technical exposure into business impact. Asset value, loss magnitude, and scenario modelling all require interpretation. That is not inherently wrong, but it creates inconsistency. Two organisations can model the same exposure and arrive at very different answers.

You can debate risk ranges. You can debate likelihood. You can debate impact. You can debate scenarios. You can debate interpretation. What you cannot debate quite so easily is what is actually exposed. That is a much less comfortable place to operate commercially.

5. The Real Problem Is Upstream

The industry behaves as if the difficult part is understanding cyber risk. It isn’t. The difficult part is collecting the data, normalising it, correlating it, doing it consistently across environments, and doing it at scale. Everything else is downstream of that. In practice, the organisations that manage cyber risk best are not the ones with the most elegant models. They are the ones with the most complete, current, and comparable data. Once you have done that properly, most of the “complexity” people talk about collapses into something much simpler: what is wrong, how bad it is, and what we fix first. The rest is commentary.
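To make the normalisation step concrete, here is a minimal sketch in Python. It is an illustration only, not a description of how Cyber Tzar works internally. The two scanner formats, their field names, and the label-to-score mapping are all assumptions invented for the example. The point is that once findings from different sources share one shape and one scale, “what we fix first” becomes a sort.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One finding in a common shape, whatever tool produced it."""
    asset: str
    issue: str
    severity: float  # normalised to a 0.0-10.0 scale


# Hypothetical mapping from text labels to the common numeric scale.
LABEL_SCORES = {"low": 2.5, "medium": 5.0, "high": 7.5, "critical": 10.0}


def from_scanner_a(raw: dict) -> Finding:
    """Scanner A (hypothetical) already reports a 0-10 number under 'score'."""
    return Finding(raw["host"].lower(), raw["title"], float(raw["score"]))


def from_scanner_b(raw: dict) -> Finding:
    """Scanner B (hypothetical) reports a text label under 'risk'."""
    score = LABEL_SCORES[raw["risk"].lower()]
    return Finding(raw["hostname"].lower(), raw["name"], score)


def fix_first(findings: list) -> list:
    """Order findings from most to least severe."""
    return sorted(findings, key=lambda f: f.severity, reverse=True)


# Made-up sample records in each tool's own format.
raw_a = [{"host": "Mail.Example.com", "title": "Outdated TLS configuration", "score": 5.3}]
raw_b = [{"hostname": "vpn.example.com", "name": "Exposed admin interface", "risk": "Critical"}]

findings = [from_scanner_a(r) for r in raw_a] + [from_scanner_b(r) for r in raw_b]
ordered = fix_first(findings)

for f in ordered:
    print(f"{f.severity:4.1f}  {f.asset}  {f.issue}")
```

The mapping from labels to numbers is itself a judgement call. The difference is that it is made once, written down, and applied identically to every environment.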

6. Third-Party Risk: Where This Breaks Completely

If you want to see this problem in its purest form, look at supply chain and third-party risk. The current model is absurd when you step back from it. You have teams sending questionnaires, chasing responses, validating answers, building spreadsheets, producing reports, escalating issues, and repeating the process. Over and over again. At scale.

I have lived this. At Bupa, we had a team of around thirty people working through supplier assessments. It took close to two years to get meaningful coverage. Look at large government departments, and the same story repeats. Hundreds of suppliers. Years of effort. Significant cost. And what are those teams actually doing? They are measuring risk. Documenting risk. Reporting risk. They are not, in any meaningful way, reducing it.

An interesting secondary effect has emerged in practice. Organisations using Cyber Tzar for supply chain assessment have consistently seen improvements in their suppliers’ security posture. Not because of enforcement alone, but because of visibility. When suppliers understand they are being compared, benchmarked, and scored alongside peers, behaviour changes. Call it competitive pressure, call it fear of missing out, the outcome is the same: measurable improvement without endless audit cycles. It turns out that when you make risk visible, people fix things.

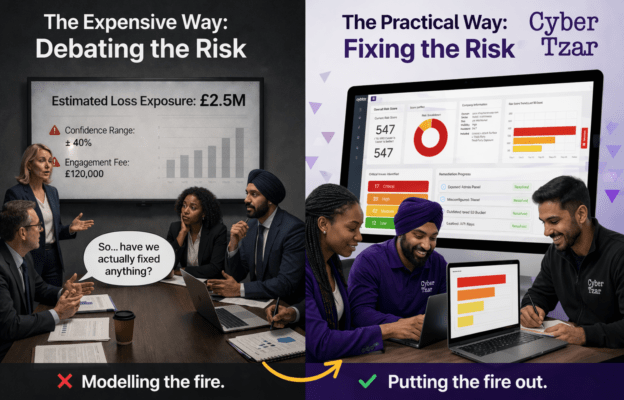

7. Bean Counting vs Doing Something

There is a difference between understanding risk and acting on it. The industry has blurred that line. A lot of what passes for cyber risk management today is, in practice, bean counting. Counting issues. Counting suppliers. Counting scores. Counting findings. It looks like progress because the numbers change. But the underlying exposure often does not. That is the bit that matters.

8. Why Cyber Tzar Exists

Cyber Tzar exists to deal with the actual bottleneck. Not interpretation. Not presentation. Data. One of the explicit goals behind Cyber Tzar was to democratise cyber risk management, not as a slogan, but as a practical shift: removing dependency on expensive intermediaries and giving organisations direct, usable visibility of their exposure.

The aim is simple. Collapse the time between “we don’t know what our exposure looks like” and “we have a clear, comparable, actionable view of it” as much as possible. That means pulling in multi-variate data across infrastructure, exposure, breach signals, and external footprint, correlating it with threat intelligence, benchmarking it against a large dataset, and producing a consistent, comparable output. Not in six months. Not after a programme. Immediately.

9. Yes, Context Matters. But Not First

There is always a pushback at this point. “But what about the business context? What about asset value? What about impact?” Of course, those things matter. But they matter after you have established what is actually there. It is far easier to assign value to known exposure than to discover unknown exposure in the first place. If you have assessed 2,000 suppliers and understand, at a baseline level, their relative risk posture, then layering business context on top becomes tractable. If you do not, you are building models on incomplete information and calling it insight.
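A small sketch of what “layering business context on top” can look like once a baseline exists. Everything here is hypothetical: the supplier names, the baseline scores, the criticality weights, and the choice of simple multiplication are assumptions for illustration, not a prescribed method.

```python
# Baseline exposure scores (higher means more exposed), assumed to have been
# measured the same way for every supplier.
baseline = {"supplier-a": 72.0, "supplier-b": 35.0, "supplier-c": 64.0}

# Business context, known for only some suppliers: how critical each one is.
criticality = {"supplier-c": 1.5, "supplier-b": 0.5}


def prioritise(baseline: dict, criticality: dict, default_weight: float = 1.0) -> list:
    """Weight each baseline score by criticality and rank highest first.

    Suppliers with no recorded context keep their baseline score.
    """
    weighted = {
        name: score * criticality.get(name, default_weight)
        for name, score in baseline.items()
    }
    return sorted(weighted.items(), key=lambda item: item[1], reverse=True)


ranked = prioritise(baseline, criticality)
for name, score in ranked:
    print(f"{name}: {score:.1f}")
```

Without the baseline there is nothing to weight. With it, context is an adjustment to a known quantity, and a supplier with no recorded context still gets a place in the ranking.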

10. The Speed Advantage

This is the difference people tend to underestimate: speed. Compressing months of assessment into hours matters, but the advantage is not speed alone. The real shift is what happens next. Organisations are not left with a report and a dependency on consultants to interpret it. They are given clear, prioritised remediation paths and can fix issues themselves. That is a fundamentally different operating model.

Traditional models take months or years to build a usable view of risk. If your understanding of exposure is always lagging reality, then your decisions are always lagging as well. Static assessments age badly. Exposure changes daily. Any model that is not continuously refreshed becomes a historical artefact rather than a management tool. What we have done is reduce that initial discovery and comparison problem to something that happens quickly and continuously. Not perfectly. But consistently. And consistency beats perfection in this space every time.

11. The Uncomfortable Consequence

If you do this properly, much of the existing work disappears. Large parts of what teams currently do in third-party risk and assurance, and parts of governance, simply become unnecessary. That creates an obvious question. What do those teams do instead? The answer is not complicated. They stop counting risk. They start dealing with it. Working with suppliers. Fixing issues. Making decisions. Prioritising remediation. Driving outcomes. The work becomes harder, but also more valuable.

12. SMEs Were Never Meant to Win This Game

Large enterprises can absorb inefficiency. They can afford long consulting engagements. They can afford layers of advisory. They can afford to run programmes that take months or years to mature. Most organisations cannot. And yet they are exposed to exactly the same threat landscape. Same internet. Same attackers. Same vulnerabilities. The idea that meaningful cyber risk management requires enterprise-scale budgets is fundamentally broken. Which is why democratising access to visibility, benchmarking, and prioritisation is not a nice-to-have. It is necessary.

13. Subjectivity vs Empirical Comparison

This is where the shift really happens. Traditional approaches lean heavily on subjectivity. Interpretation, context, judgement. There is a place for that. But it should not be the starting point. What we have done is push as much of the process as possible into empirical comparison. Not “what do we think this means?” But: “How does this actually compare across thousands of organisations, systems, suppliers?” That reduces debate. It reduces bias. And most importantly, it reduces the time to a decision.
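As a rough illustration of empirical comparison, the sketch below places one organisation’s exposure score within a peer set using a percentile rank. The peer scores are made-up numbers, the “higher means more exposed” convention is an assumption, and a real benchmark would draw on a far larger dataset than ten values.

```python
from bisect import bisect_left


def percentile_rank(score: float, peer_scores: list[float]) -> float:
    """Return the percentage of peers with a strictly lower exposure score."""
    if not peer_scores:
        raise ValueError("peer_scores must not be empty")
    ordered = sorted(peer_scores)
    # bisect_left gives the count of values strictly below `score`.
    return 100.0 * bisect_left(ordered, score) / len(ordered)


# Hypothetical peer exposure scores (higher means more exposed).
peers = [12.0, 18.5, 25.0, 31.0, 40.0, 47.5, 55.0, 62.0, 70.0, 88.0]

rank = percentile_rank(58.0, peers)
print(f"More exposed than {rank:.0f}% of peers")
```

A statement like “more exposed than most of your peers” leaves far less room for debate than a modelled loss range, which is the point of starting with comparison.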

14. Conclusion: No Cyber Idea

I keep coming back to the same phrase. No cyber idea. It is not about intelligence. There are plenty of intelligent people in this space. It is about the approach. If your starting point is modelling before measuring, debating before identifying, and presenting before fixing, then you are not managing cyber risk. You are managing a story about cyber risk. And that is fine, right up until something goes wrong. We do not just compress time. We remove the need to keep paying someone else to tell you what to do next.

15. Epilogue: Final Thought

Use consultants where they add value. Use frameworks where they help. Use quantification when it informs decisions. But be clear about the objective. Cyber risk management is not about building better explanations of exposure. It is about reducing it. Find it. Understand it. Fix it. If you are not doing that, then regardless of how sophisticated it sounds, it comes back to the same thing. No cyber idea.

16. References

- Horkan, W. Cyber Risk Quantification: Towards a Cyber Risk Score. Available at: https://horkan.com/2025/04/30/cyber-risk-quantification-towards-a-cyber-risk-score

- Horkan, W. The Role of Cyber Risk Quantification, Scoring, and Benchmarking in Cyber Insurance. Available at: https://horkan.com/2025/04/28/the-role-of-cyber-risk-quantification-scoring-and-benchmarking-in-cyber-insurance

- Horkan, W. A History of Cyber Risk Quantification. Available at: https://horkan.com/2025/04/02/a-history-of-cyber-risk-quantification

- Horkan, W. Mapping Cyber Risk Approaches: Bridging Quantification and Scoring. Available at: https://horkan.com/2025/04/09/mapping-cyber-risk-approaches-bridging-quantification-and-scoring

- FIRST. Common Vulnerability Scoring System (CVSS). Available at: https://www.first.org/cvss/

- The Open Group. FAIR (Factor Analysis of Information Risk). Available at: https://www.opengroup.org/standards/fair

- National Institute of Standards and Technology (NIST). Risk Management Framework (RMF). Available at: https://www.nist.gov/

- National Institute of Standards and Technology (NIST). Cybersecurity Framework (CSF). Available at: https://www.nist.gov/cyberframework

- BitSight Technologies. Security Ratings Methodology. Available at: https://www.bitsight.com/

- SecurityScorecard. Security Ratings Platform. Available at: https://securityscorecard.com/

- Mayer-Schönberger, V., & Cukier, K. Big Data: A Revolution That Will Transform How We Live, Work, and Think. London: John Murray.