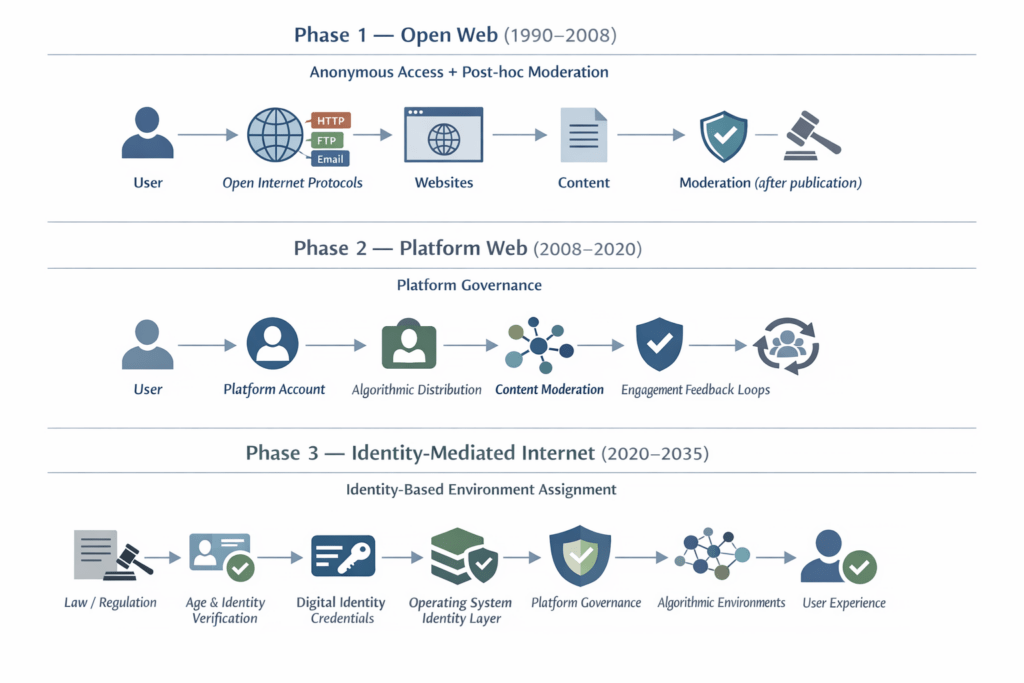

Governments around the world are introducing age-verification and youth social-media laws, but these policies may be doing far more than protecting children. They are quietly pushing identity into operating systems, app stores, and the core infrastructure of the internet, shifting governance down the stack and creating new enforcement chokepoints. Along the way, they reshape platform power, favour large incumbents, and redefine how users access digital environments. As illustrated in “Evolution of Internet Architecture (1990–2035)”, this may signal a transition toward an “identity-mediated” web. This article documents those changes, drawing on historical precedents from UK identity systems (including the UK identity card programme) and US telecommunications, and comparative developments across multiple jurisdictions, to show how independent regulatory efforts are converging on a shared architectural shift.

Evolution of Internet Architecture (1990–2035) Diagram

The development of age verification infrastructure can be understood within a broader historical evolution of the internet. Early internet systems prioritised anonymous access and decentralised protocols. The rise of large social media platforms introduced centralised governance through algorithmic moderation. Emerging regulatory frameworks may now be pushing the internet toward a third phase in which identity attributes determine access to digital environments.

Structurally, each phase corresponds to a primary control layer:

| Phase / Era | Primary Control Layer and Aspects |
|---|---|
| Phase 1 (1990–2008): Open Web | Protocols: anonymous access and post-publication moderation. |
| Phase 2 (2008–2020): Platform Web | Platforms: centralised governance via accounts and algorithms. |
| Phase 3 (2020–2035): Identity-Mediated Web | Identity infrastructure: verified user attributes (starting with age) gate access and shape environments before interaction. |

Executive Summary

Over the past several years, governments around the world have introduced a growing number of laws aimed at protecting children online. These include social media age restrictions, online safety legislation, and requirements for platforms to prevent minors from accessing harmful content.

Taken individually, these policies appear to address specific problems within particular jurisdictions. Viewed collectively, however, they reveal a broader shift in how the internet itself is being governed.

Historically, the internet operated as an open network in which users could participate anonymously, and content moderation occurred after information was published. The emerging regulatory model increasingly reverses that relationship. Instead of moderating content after interaction occurs, platforms are being required to classify users, most commonly by age, before determining what digital environments they may access.

This shift is driving the development of new technical infrastructure across several layers of the technology stack. Age verification systems, digital identity credentials, operating-system identity services, and platform governance frameworks are gradually combining into a layered architecture in which user attributes shape the information environments users encounter online.

In practice, this means that the internet is beginning to move from a model of open access followed by moderation toward one of identity-mediated access and environment design. Age verification legislation may therefore represent the first large-scale deployment of identity attributes as infrastructure within the operational architecture of the internet.

The infrastructure emerging around age verification and operating-system identity layers can also be understood through the lens of Digital Public Infrastructure (DPI). DPI frameworks typically describe three foundational capabilities that enable participation in digital society: authentication (identity), transactions (payments), and data exchange between institutions.

Age verification systems represent a specialised form of authentication infrastructure embedded directly into the operational stack of the internet. When identity attributes become embedded within browsers, operating systems, and digital identity wallets, they begin to function not merely as regulatory compliance mechanisms but as components of a broader infrastructure for governing digital participation. Age verification is the first identity attribute to be widely deployed within this emerging system, but the infrastructure being built could support additional forms of classification in the future.

This article examines the legislative origins of this shift, the technical mechanisms being developed to implement it, and the broader implications for anonymity, platform power, algorithmic governance, and the future structure of the web.

The central argument is not that governments are intentionally redesigning the internet. Rather, the interaction between child-safety regulation, platform governance, and identity infrastructure is gradually producing a new form of digital architecture: one in which identity attributes, platform rules, and algorithmic systems increasingly operate together to structure online environments.

Understanding this transition is essential for evaluating the long-term consequences of the current wave of age-verification laws.

Contents

- Evolution of Internet Architecture (1990–2035) Diagram

- Executive Summary

- Contents

- Abstract

- 1. Introduction: The Sudden Appearance of an Age-Gated Internet

- 2. The Legislative Landscape

- 3. Timeline of the Global Age-Verification Wave (2015–2026)

- 3.1 2015–2016: The Pornography Problem

- 3.2 2017: The UK Digital Economy Act

- 3.3 2019: Collapse of the First Generation

- 3.4 2020–2021: From Pornography to “Online Safety”

- 3.5 2022: The Rise of Platform Governance

- 3.6 2023: The Online Safety Act

- 3.7 2024: Expansion Across the United States

- 3.8 2025: Enforcement Begins

- 3.9 2026: Global Expansion and Circumvention

- 4. The ID Card Debate in the United Kingdom: A Long Political “Debaclate”

- 4.1 Wartime Identity Cards and Their Abolition

- 4.2 The Modern Origins of the UK Identity Card Debate

- 4.3 The Identity Cards Act 2006

- 4.4 The Limited Success of Digital Identity: the Government Gateway

- 4.5 The Failure of Digital Identity: GOV.UK Verify

- 4.6 Delegated Authority and the Next Generation of Digital Identity

- 4.7 Security, Requirements, and the Practical Challenges of Identity Infrastructure

- 4.8 The European Contrast: France and Germany

- 4.9 Why the UK Debate Is Different

- 4.10 From ID Cards to Digital Identity

- 4.11 And onto Age Verification

- 4.12 The Reality of Identity in the UK: De Facto Systems and Structural Gaps

- 5. What People Think Is Happening

- 5.1 Narrative A: Child Protection

- 5.2 Narrative B: The “Censorship Industrial Complex”

- 5.3 Narrative C: Advertising Markets and Bot Detection

- 5.4 Narrative D: Platforms Shifting Verification to Infrastructure

- 5.5 The Limits of These Narratives

- 5.6 Emerging Policy Debate

- 5.7 Moderation vs. Environment Design

- 5.8 A Historical Precedent: Governance Migration in Telecommunications

- 5.9 Motivations and Structural Outcomes

- 6. What Is Actually Being Built

- 6.1 The Downward Migration of Internet Governance

- 6.2 The Old Internet

- 6.3 The Emerging Internet

- 6.4 Identity as Infrastructure

- 6.5 Diverging Architectural Directions

- 6.6 Competing Models of Identity Infrastructure

- 6.7 Attribute Proof Rather Than Disclosure

- 6.8 The Internet’s Anti-Identity Origins

- 6.9 Environment Design

- 6.10 Evaluating the Emerging Identity-Mediated Internet as Digital Public Infrastructure (DPI)

- 7. How OS-Level Age Verification APIs Work

- 8. Why Governments Target Operating Systems

- 9. Technical Challenges and Circumvention

- 10. Economic Incentives Behind the System

- 11. Behavioural Collapse and Identity Signals

- 12. Asymmetric Integration and the Post-LLM Web

- 13. The Internet Architecture Now Emerging: Part 1: Conceptual Explanation

- 13.1 Why Age Verification Appeared Everywhere at Once

- 13.2 Age Verification as the First Identity Attribute

- 13.3 Toward a KYC-Style Attribute Governance Model for the Internet

- 13.4 The Convergence of AI Governance, Platform Governance, and Age Verification

- 13.5 Uncoordinated Change Can Still Produce Structural Transformation

- 13.6 Geopolitical Convergence: Why Every Major Power Wants the Same Stack

- 14. The Internet Architecture Now Emerging II: System Architecture Walkthrough

- 15. Does This Actually Protect the People It Claims To?

- 16. Implications for the Future of the Internet

- 17. Conclusion: What Architecture Is Emerging?

- 18. Appendices

- 18.1 Appendix A: Global Map of Age Verification Laws

- 18.2 Appendix B: Technical Implementation Models

- 18.3 Appendix C: An Infrastructure Still in Formation

- 18.4 Appendix D: Bibliography

- 18.4.1 Legislation and Regulatory Frameworks

- 18.4.2 Digital Platform Governance and Regulation

- 18.4.3 Identity Infrastructure and Digital Identity Theory

- 18.4.4 Surveillance Capitalism and Algorithmic Governance

- 18.4.5 Youth Online Safety and Social Media Research

- 18.4.6 Internet Governance and Platform Power

- 18.5 Appendix E: Media, Government Reports, and Institutional Sources

- 18.6 Appendix F: Optional Suggested Reading

- 18.7 Appendix G: Media Timeline of Age-Verification and Online Safety Regulation (2015–2026)

- 18.7.1 2015–2017 — First Wave: Pornography Age Verification

- 18.7.2 2018–2019 — Collapse of the First Age Verification System

- 18.7.3 2020–2021 — Platform Harm Debate and Whistleblowers

- 18.7.4 2022 — Platform Governance Legislation

- 18.7.5 2023 — Online Safety Frameworks

- 18.7.6 2024 — Youth Social Media Laws

- 18.7.7 2025 — Enforcement Begins

- 18.7.8 2026 — Global Expansion and Infrastructure Debate

Abstract

Age verification laws introduced to protect children online are often debated as isolated regulatory interventions. This article argues that they represent something more significant: the early stages of a structural shift in the architecture of the internet.

Across multiple jurisdictions, governments are requiring digital platforms to prevent minors from accessing certain forms of online content. To enforce these obligations at scale, platforms must obtain reliable information about users’ identities, particularly their age. The technical mechanisms developed to provide this information increasingly involve operating system identity frameworks, cryptographic credentials, and digital identity infrastructure.

This article examines how these developments are embedding identity attributes into the technological layers through which digital services operate. The result is the emergence of what this article describes as an identity-mediated internet architecture, in which digital environments are configured according to verified user attributes before interaction occurs.

Seen in isolation, age verification appears to be a narrow regulatory tool. Seen in architectural context, it represents the first widely deployed identity signal within a system that may increasingly organise digital environments around identity, governance, and algorithmic optimisation.

1. Introduction: The Sudden Appearance of an Age-Gated Internet

For most of the past three decades, the internet has operated on a remarkably simple social contract: access first, identity later, if at all. A user could arrive at a website, forum, or social platform with little more than a browser and a connection. Identity was optional, anonymity common, and verification rare. While certain domains (banking, government services, enterprise networks) required authentication, the broader informational web largely did not.

That assumption is now quietly dissolving.

Over the past eighteen to twenty-four months, a cluster of regulatory initiatives has begun appearing across multiple jurisdictions. Taken individually, these policies appear modest: a youth-protection law in one country, a platform safety regulation in another, a proposed age-verification scheme somewhere else. Yet when examined collectively, they reveal something more substantial. Governments around the world are beginning to restructure how access to digital services is mediated.

The shift is not happening through a single grand legislative project. Rather, it is emerging through a convergence of policy initiatives that (while framed differently in different regions) tend toward the same underlying mechanism: systems that determine who a user is, or at least what category of person they belong to, before granting access to online services.

Across jurisdictions, the new regulatory wave generally falls into several overlapping categories:

- Social media bans or restrictions for minors, which often prohibit accounts below a certain age or require parental consent.

- Operating-system-level age verification mandates, which require devices to expose age signals to apps and services.

- Platform “duty of care” regimes, which oblige digital platforms to actively protect minors from certain types of content or algorithmic exposure.

- Digital identity infrastructures, including government-backed identity wallets and verified credentials.

- Algorithmic safety regulations, which require platforms to adjust recommendation systems for children and vulnerable users.

Many of these laws are scheduled to come fully into force between 2025 and 2027, a relatively compressed implementation window for policies that potentially affect billions of internet users.

What makes the phenomenon particularly difficult to perceive in real time is that each policy is framed in narrowly local terms. A national parliament debates youth mental health. A state legislature responds to concerns about online pornography. A regulatory agency updates child-safety guidelines. Each measure appears to address a discrete problem within a specific jurisdiction.

Viewed from a distance, however, the pattern is unmistakable.

Across North America, Europe, Australia, and parts of Asia, policymakers are gradually constructing a regulatory environment in which access to digital platforms increasingly depends on the verification of certain user attributes, most commonly age, but potentially others in the future. The individual initiatives differ in scope and mechanism, yet they converge on the same operational premise: platforms cannot regulate user experiences without knowing something about the user.

The result is the quiet emergence of what might be called an age-gated internet.

This term should not be understood narrowly as referring only to pornography filters or parental controls. Instead, it describes a broader architectural transition in which online services increasingly require systems capable of determining whether a user is a child, a teenager, or an adult before deciding what content, features, or algorithmic pathways that user may access.

The deeper shift can be summarised in a single observation:

Multiple regions of the world are moving toward identity-mediated access to digital services.

In such a system, the internet does not simply respond to requests for information. It first classifies the requesting entity. Only then does it determine what the user is permitted to see, do, or participate in.

This development represents a subtle but profound departure from the original architecture of the web. The early internet assumed that information flows could be moderated after publication, through community moderation, legal intervention, or platform governance. The emerging model assumes something different: that digital environments must be structured around categories of users before interaction occurs.
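The contrast between the two models can be made concrete with a minimal sketch. Everything in it is an illustrative assumption rather than a description of any deployed system: the `AgeCategory` values, the `POLICY` table, and both function names are hypothetical, and the choice to refuse unclassified visitors is one possible design among several.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class AgeCategory(Enum):
    CHILD = "child"
    TEEN = "teen"
    ADULT = "adult"


@dataclass(frozen=True)
class Request:
    resource: str
    # None models an anonymous visitor who carries no age signal.
    age_category: Optional[AgeCategory] = None


# Hypothetical policy table: which age categories may reach which resource.
POLICY = {
    "news": {AgeCategory.CHILD, AgeCategory.TEEN, AgeCategory.ADULT},
    "social_feed": {AgeCategory.TEEN, AgeCategory.ADULT},
    "adult_content": {AgeCategory.ADULT},
}


def open_web_access(request: Request) -> bool:
    """Open-web model: serve any known resource; moderation happens afterwards."""
    return request.resource in POLICY


def identity_mediated_access(request: Request) -> bool:
    """Identity-mediated model: classify the requester first, then decide."""
    if request.age_category is None:
        # Assumption of this sketch: unclassified requesters are refused.
        return False
    return request.age_category in POLICY.get(request.resource, set())
```

Under the first function an anonymous request is served like any other; under the second, the same request fails before any content is considered, which is the reversal the paragraph above describes.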

Age verification is the most politically acceptable entry point for this transformation. Few policymakers are willing to defend unrestricted access to harmful material for children. As a result, legislation framed around child protection encounters relatively little resistance. Yet once the technical infrastructure for verifying age exists, it becomes possible to extend the same mechanisms to other forms of classification.

For this reason, the current regulatory wave should not be understood solely as a set of youth-protection measures. It is also the early stage of a broader reconfiguration of how identity, platforms, and governance intersect on the internet.

The transformation is still incomplete. Many of the systems required to implement these laws (verification technologies, operating-system interfaces, and digital credential frameworks) remain under development. Legal challenges continue in several jurisdictions. Technical feasibility remains contested.

Nevertheless, the direction of travel is increasingly clear. The internet is beginning to evolve from an environment where users could arrive anonymously and negotiate identity later, into one where identity attributes increasingly shape access from the outset.

How that shift occurred, and what it ultimately implies, requires examining both the legislative landscape and the technological systems that are emerging to support it.

This article argues that the infrastructure emerging around age verification and online safety regulation is quietly transforming the architecture of the internet, and that contemporary child-safety legislation is contributing to the emergence of the third architectural phase illustrated in Figure 1, “Evolution of Internet Architecture (1990–2035)”.

This article refers to this emerging system as an identity-mediated internet architecture. In such systems, identity attributes are embedded within operating systems, identity infrastructure, and digital platforms, enabling services to classify users and configure digital environments before interaction occurs.

The open web model, in which anyone could access services anonymously and moderation occurred after the fact, is being replaced by identity-mediated environments in which users are classified before interaction occurs.

1.1 Age Verification as the First Identity Attribute

One of the central claims of this paper is that age verification should not be understood primarily as a narrow child-safety policy. Rather, it represents the first large-scale deployment of a verified identity attribute within the operational infrastructure of the modern internet.

Historically, the web functioned largely without embedded identity infrastructure. Users arrived at websites anonymously or under pseudonyms, and platforms moderated behaviour and content after interaction occurred. Identity attributes were optional and generally confined to specific domains such as banking, government services, or enterprise networks.

Age verification changes this architectural assumption.

When platforms are required to determine whether a user is a minor before granting access to certain features or content, they must obtain a reliable signal about the user’s age category. Once that signal exists, it becomes reusable across multiple services and systems.

In other words, age becomes an identity attribute embedded within digital infrastructure.

The significance of this shift lies not in the attribute itself but in the precedent it establishes. Once infrastructure exists for verifying and transmitting one attribute, such as age, the same mechanisms can support additional attributes in the future. These may include jurisdiction, identity verification status, professional credentials, or other forms of eligibility verification.
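A short sketch illustrates why the precedent matters: a mechanism built to attest one attribute is usually indifferent to which attribute it carries. The example below is an assumption-laden illustration, not a description of any real credential scheme. It uses an HMAC over a shared demo key as a stand-in for the public-key credentials a production system would more plausibly use, and the names `AttributeClaim`, `issue_claim`, `verify_claim`, and `ISSUER_KEY` are hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical shared secret, for illustration only.
ISSUER_KEY = b"demo-issuer-key"


@dataclass(frozen=True)
class AttributeClaim:
    name: str       # e.g. "age_over_18"
    value: str      # e.g. "true"
    signature: str  # hex HMAC-SHA256 over "name=value"


def issue_claim(name: str, value: str, key: bytes = ISSUER_KEY) -> AttributeClaim:
    """Issuer side: sign an attribute name/value pair."""
    message = f"{name}={value}".encode()
    signature = hmac.new(key, message, hashlib.sha256).hexdigest()
    return AttributeClaim(name, value, signature)


def verify_claim(claim: AttributeClaim, key: bytes = ISSUER_KEY) -> bool:
    """Relying-service side: recompute the signature and compare in constant time."""
    message = f"{claim.name}={claim.value}".encode()
    expected = hmac.new(key, message, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, claim.signature)


# The same two functions handle age and any later attribute unchanged.
age_claim = issue_claim("age_over_18", "true")
jurisdiction_claim = issue_claim("jurisdiction", "GB")
```

Nothing in `issue_claim` or `verify_claim` is specific to age, so adding jurisdiction or any other attribute requires no new code path, only a new attribute name.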

Age verification is therefore best understood as the first widely deployed identity signal within a broader architectural transition toward identity-mediated digital environments.

2. The Legislative Landscape

If the emergence of an age-gated internet appears sudden, the explanation lies partly in how the relevant legislation has developed. Rather than arising from a single international framework or coordinated regulatory regime, the legal architecture has evolved through a patchwork of national and regional initiatives. Each jurisdiction has addressed what it perceives to be a local problem: youth mental health, online pornography, algorithmic harms, or platform accountability. Yet the resulting legal frameworks share a common feature: they all require some mechanism for determining the age, or at least the age category, of the user.

The cumulative effect is not merely regulatory overlap but a converging global architecture. Governments that differ widely in political systems, legal traditions, and technological ecosystems are nevertheless arriving at broadly similar policy conclusions. Platforms cannot meaningfully protect children online, regulators argue, unless they can determine whether the user is in fact a child.

The legislative landscape therefore represents the first concrete layer of the shift toward identity-mediated digital services.

2.1 United States

In the United States, the regulatory picture is unusually fragmented. Unlike the European Union or the United Kingdom, the federal government has not yet established a comprehensive national framework governing youth access to social media or age verification online. Instead, regulation has emerged through a growing number of state-level initiatives.

These laws vary significantly in design and scope. Some focus on social media access; others target adult content or platform duty-of-care requirements. Most combine elements of both.

Several states have enacted or proposed legislation within the past two years:

| State | Law / Policy | Approach |
|---|---|---|
| California | Digital Age Assurance Act | Age estimation / safety by design |
| Florida | HB3 | Social media age restrictions |
| Utah | Social Media Regulation Act | Parental consent |
| Nebraska | Youth online safety proposals | Parental consent model |
| Louisiana | Pornography age verification | Content access restriction |
| Mississippi | Pornography age verification | Content access restriction |
| Virginia | Youth social media rules | Age estimation / safety by design |

The underlying regulatory strategies differ. Some states attempt to prohibit minors from accessing specific services entirely, while others impose parental consent models or time-based restrictions. Still others focus on forcing platforms to implement age-verification mechanisms before allowing access to certain categories of content.

What unites these laws is the assumption that platforms must determine the age status of their users before enforcing regulatory obligations.

Many of these initiatives remain under constitutional challenge. Courts have been asked to consider whether mandatory age verification or youth social media restrictions violate First Amendment protections or impose unreasonable burdens on speech and privacy. Several early laws have already faced injunctions or revisions, suggesting that the American regulatory landscape will continue to evolve through litigation as much as legislation.

Nonetheless, the direction is clear. In the absence of federal policy, state governments have begun experimenting with multiple regulatory models simultaneously.

Despite this fragmentation, American regulation still has global consequences. Most of the world’s dominant technology platforms are headquartered in the United States, including Apple, Google, Meta, and Microsoft. If compliance with state-level age verification laws requires new forms of age signalling at the operating-system or app-store level, those mechanisms may be implemented globally rather than limited to individual jurisdictions. As a result, even decentralised state regulation in the United States may contribute to the emergence of shared technical infrastructure across the internet.

2.2 Europe

Europe presents a more structured legislative environment, largely due to the regulatory role of the European Union and the historically stronger relationship between digital governance and public policy.

Two major frameworks dominate the European approach: the EU Digital Services Act and the UK Online Safety Act.

The Digital Services Act (DSA) establishes a comprehensive regulatory regime for large online platforms operating within the European Union. Rather than mandating specific verification technologies, the DSA imposes a system of risk management obligations. Platforms must identify and mitigate systemic risks associated with their services, including risks affecting minors.

These obligations include requirements to:

- assess systemic platform risks

- implement safeguards protecting children

- mitigate exposure to harmful content

- increase transparency around algorithmic systems.

While the DSA does not explicitly mandate universal age verification, it effectively requires platforms to deploy mechanisms capable of distinguishing between adult and minor users if they are to satisfy their obligations under the law.

The United Kingdom has adopted a more direct regulatory approach through the Online Safety Act, passed in 2023. The law requires platforms hosting potentially harmful content to implement what regulators describe as “highly effective age assurance.”

This requirement applies particularly to categories of content considered dangerous for minors, including:

- pornography

- self-harm content

- suicide-related material

- eating disorder content.

In practice, this pushes platforms toward implementing age verification technologies or equivalent mechanisms capable of distinguishing minors from adults.

Beyond these two major frameworks, several European countries have begun introducing additional youth protection laws.

| Country | Policy Focus |
|---|---|
| France | Social media access requires parental consent under 15 |
| Spain | Proposed social media restrictions for minors |
| Denmark | Youth social media access limits under consideration |
| Norway | Proposed minimum social media age of 15 |

These initiatives vary widely in detail, but they share the same structural premise: platforms must possess reliable information about the age category of their users.

2.3 Australia

Among democratic nations, Australia has arguably taken the most aggressive legislative stance.

The country has introduced what is widely described as the world’s first nationwide social media ban for users under the age of sixteen. Under this regulatory framework, major social platforms are required to ensure that users below this threshold cannot create accounts.

In practical terms, this means platforms must implement mechanisms capable of verifying whether a user is sixteen or older before allowing access.

Unlike parental consent models adopted elsewhere, the Australian system places primary responsibility on the platform rather than the parent or user. Failure to enforce the rule can result in significant financial penalties.

The policy has attracted both strong support and significant criticism. Supporters argue that it represents a necessary intervention to address youth mental health concerns and social media addiction. Critics contend that the law may be technically unenforceable and risks encouraging intrusive verification technologies.

Regardless of its long-term effectiveness, the Australian initiative marks an important regulatory milestone: it demonstrates the willingness of governments to move beyond content restrictions toward direct control of platform access based on user age.

2.4 Global Adoption

The trend is not limited to a handful of Western democracies. Age-verification frameworks or youth access restrictions are now appearing across a diverse range of political and technological environments.

Countries currently implementing or considering such systems include:

- United Kingdom

- Australia

- Germany

- France

- Spain

- Norway

- South Korea

- China

- United Arab Emirates

- Saudi Arabia

- Brazil

- Mexico

In some of these countries, age verification emerges through youth safety legislation. In others, it forms part of broader identity or telecommunications regulation. Several nations in Asia and the Middle East already operate digital systems in which real-name registration or national identity verification is required for certain online services, making age verification a relatively straightforward extension.

The resulting picture is not one of uniform policy but of converging regulatory strategies. Governments with very different political philosophies are increasingly arriving at the same operational requirement: if platforms must regulate content exposure for children, they must first determine whether the user is a child.

2.5 Major Age-Verification Laws

| Country | Law | Scope |
|---|---|---|
| United Kingdom | Online Safety Act | Harmful online content |
| Australia | Social media age ban | Users under 16 |
| France | Youth social media law | Users under 15 |
| United States (various states) | Multiple state laws | Social media and adult content |

Seen collectively, these laws reveal something important. Age verification is not emerging as a niche policy limited to pornography regulation or parental control systems. It is becoming a central component of the broader effort to regulate digital platforms.

Once this shift is recognised, the next question naturally arises: how did the policy momentum build so quickly?

To answer that, we must look back at the evolution of the idea itself.

3. Timeline of the Global Age-Verification Wave (2015–2026)

Technological transformations rarely arrive in a single decisive moment. More often, they accumulate through a sequence of experiments, failures, reframings, and institutional learning. The emergence of age verification as a central instrument of internet governance follows this pattern precisely. What now appears as a coordinated regulatory wave was, in reality, assembled over nearly a decade through a series of policy iterations.

Understanding this trajectory matters. The shift toward identity-mediated internet access did not arise suddenly from a single legislative innovation. It emerged gradually as policymakers attempted, often unsuccessfully, to regulate specific harms within the architecture of an open, largely anonymous network.

The period from roughly 2015 to 2026, therefore, represents a formative phase in which the concept of age verification evolved from a narrowly targeted tool for restricting adult content into a broader mechanism for structuring platform governance itself.

3.1 2015–2016: The Pornography Problem

The earliest modern age-verification proposals were almost entirely focused on a single issue: children accessing online pornography.

By the mid-2010s, policymakers across several countries had become increasingly concerned about the ease with which minors could access explicit material online. Parliamentary inquiries and public consultations began exploring mechanisms for restricting such access.

The technological proposals discussed at the time were relatively blunt instruments. Among the options considered were:

- credit-card verification systems that would restrict adult sites to users with valid payment credentials

- national identity databases capable of verifying a user’s age before granting access to restricted content

- age-check gateways operated by third-party verification providers.

These proposals reflected an early assumption that regulating specific types of websites would be sufficient. The broader architecture of the internet itself was not yet under consideration.

At this stage, age verification was seen primarily as a content gatekeeping mechanism, not as a general framework for regulating digital platforms.

3.2 2017: The UK Digital Economy Act

The first serious attempt to implement such a system arrived with the United Kingdom’s Digital Economy Act of 2017. The law required commercial pornography websites accessible from the UK to implement robust age verification mechanisms.

The policy was ambitious. Websites failing to comply could face:

- financial penalties

- payment processor restrictions

- potential ISP-level blocking.

For the first time, a national government attempted to mandate age verification across a major category of online services.

In principle, the system appeared straightforward. In practice, it proved extraordinarily difficult to implement.

3.3 2019: Collapse of the First Generation

By 2019, the British age-verification initiative had effectively collapsed.

Several problems became immediately apparent:

- Privacy concerns. Critics warned that centralised age-verification systems could create databases linking individuals to their consumption of explicit material, an obvious target for data breaches or abuse.

- Technical difficulties. Implementing verification across thousands of international websites proved far more complex than anticipated.

- Industry resistance. Many platforms argued that compliance requirements were unclear, expensive, or technically infeasible.

Facing mounting criticism and practical implementation barriers, the UK government ultimately abandoned the initiative.

At the time, the collapse was widely interpreted as a failure of age-verification policy itself. In retrospect, it represented something else: the failure of the first generation of regulatory thinking.

Rather than disappearing, the concept would soon return in a different form.

3.4 2020–2021: From Pornography to “Online Safety”

Following the failure of early porn-site verification schemes, policymakers reframed the problem.

The issue was no longer presented as access to pornography alone. Instead, regulators increasingly spoke about online harms affecting children more broadly. These included exposure to self-harm content, eating disorder communities, grooming risks, and algorithmically amplified harmful material.

Two major regulatory projects emerged from this reframing.

In the United Kingdom, the government began developing what would eventually become the Online Safety Bill, a far more comprehensive framework for regulating digital platforms.

At the European level, policymakers began drafting the Digital Services Act (DSA), which introduced a new model of systemic platform oversight.

The key conceptual shift was subtle but profound. Rather than attempting to block specific categories of websites, regulators began to ask whether platforms themselves should bear responsibility for identifying and mitigating risks affecting minors.

This question would reshape the entire regulatory landscape.

3.5 2022: The Rise of Platform Governance

By 2022, a new regulatory paradigm had begun to take shape.

Earlier approaches relied on content takedowns, removing harmful material once it had been identified. The emerging framework instead treated platforms as complex systems capable of generating societal risks through their design, algorithms, and recommendation structures.

Regulators, therefore, began focusing on systemic risk management.

Under this model, platforms must actively assess and mitigate risks generated by their services. If certain categories of content are harmful to children, the platform must prevent minors from encountering them.

But this logic immediately creates a practical requirement: platforms must know whether a given user is a minor.

Age verification, once confined to pornography regulation, suddenly became relevant to the governance of entire digital ecosystems.
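The dependency described above can be sketched in a few lines of Python. This is an illustrative sketch only: the category names, the `AgeSignal` values, and the `may_view` function are hypothetical and are not drawn from any statute or platform API. It assumes a platform that treats an unknown age as if the user might be a minor.

```python
from dataclasses import dataclass
from enum import Enum


class AgeSignal(Enum):
    ADULT = "adult"
    MINOR = "minor"
    UNKNOWN = "unknown"  # no age assurance has been performed


# Hypothetical category labels, loosely following those named in the text.
RESTRICTED_FOR_MINORS = {"pornography", "self_harm", "eating_disorder"}


@dataclass
class User:
    user_id: str
    age_signal: AgeSignal = AgeSignal.UNKNOWN


def may_view(user: User, category: str) -> bool:
    """Return True if the user may be shown content in this category."""
    if category not in RESTRICTED_FOR_MINORS:
        return True
    # A duty to shield minors cannot be met for users of unknown age,
    # so only a positive "adult" signal unlocks restricted categories.
    return user.age_signal is AgeSignal.ADULT
```

Under these assumptions, a rule written to protect minors ends up requiring an age signal from every user who wants access to a restricted category, which is the structural point the section makes.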

3.6 2023: The Online Safety Act

The United Kingdom became the first country to formalise this shift through the Online Safety Act, passed in 2023.

The law requires platforms hosting potentially harmful content to implement “highly effective age assurance” systems. While the legislation does not mandate a specific technical solution, it establishes a clear expectation: platforms must be able to distinguish between adult and minor users.

This requirement applies to content involving:

- pornography

- self-harm or suicide

- eating disorders

- other forms of potentially harmful material.

The Act represents a turning point. Age verification was no longer a narrow tool targeting adult websites; it had become a foundational component of platform regulation.

3.7 2024: Expansion Across the United States

By 2024, regulatory momentum had begun spreading rapidly across the United States.

A growing number of state legislatures introduced laws addressing youth access to online services. Some focused on pornography websites; others targeted social media platforms directly.

The result was a patchwork of state-level initiatives, each experimenting with different models of verification and restriction.

While the legal outcomes remained uncertain (many laws faced immediate constitutional challenges), the political signal was unmistakable. Age verification had become a mainstream regulatory idea rather than a fringe proposal.

At the same time, policymakers in several other countries began drafting similar laws, accelerating what now appears to be a global policy diffusion process.

3.8 2025: Enforcement Begins

By 2025 the first major enforcement mechanisms began to take effect.

Platforms operating in the United Kingdom and other jurisdictions started deploying practical verification systems. These included:

- facial age-estimation technology

- government ID verification services

- credit-card-based verification mechanisms.

These tools were far from perfect. Critics raised concerns about accuracy, privacy, and the potential normalisation of biometric verification online. Nevertheless, the systems marked the first large-scale implementation of age verification across major digital platforms.

The infrastructure that had remained largely theoretical for several years was now being deployed in production environments.

3.9 2026: Global Expansion and Circumvention

By 2026 age verification had become a common feature of digital governance debates across multiple regions.

Some platforms chose to comply with new regulations. Others took a different approach: restricting access entirely in jurisdictions where compliance proved too burdensome.

At the same time, users began adapting to the new environment. Reports of circumvention methods increased, including the use of VPNs and other tools to bypass geographic restrictions.

The result is a regulatory ecosystem still in flux. Governments continue expanding age-verification requirements, platforms continue experimenting with implementation strategies, and users continue searching for ways around the restrictions.

What is clear, however, is that the idea itself has survived every early failure. The concept that once appeared limited to blocking pornography has evolved into a central instrument for governing digital platforms.

The question is no longer whether age verification will play a role in internet governance. The question is how far the architecture built around it will extend.

4. The ID Card Debate in the United Kingdom: A Long Political “Debaclate”

The debate over identity systems in the United Kingdom has a long and unusually contentious history. Unlike many European countries, where national identity cards are routine administrative tools, attempts to introduce comprehensive identity systems in Britain have repeatedly triggered political backlash and eventual abandonment. The result is a recurring policy “debacle”, or perhaps more fittingly, a debaclate: a cycle in which governments attempt to introduce identity infrastructure only for the proposals to collapse under political and institutional pressure.

This pattern has recurred across multiple generations of policy. Wartime identity cards were abolished in 1952 after public resistance; the Identity Cards Act 2006 was repealed in 2010 after fierce political opposition; and more recently, the government’s flagship digital identity programme, GOV.UK Verify, was quietly abandoned after failing to achieve adoption. Understanding this history is important because it shapes how contemporary debates over digital identity, age verification, and online safety are perceived in the UK.

4.1 Wartime Identity Cards and Their Abolition

Britain first introduced a national identity card system during the Second World War.

The National Registration Act 1939 required residents to register with the government and carry identity cards. The system was intended primarily for wartime administration, including:

- rationing

- population management

- national security.

After the war, the system remained in place, but public acceptance eroded. The decisive turning point came in 1950, when a motorist named Clarence Willcock refused to present his identity card to a police officer. The case ultimately reached the courts, and although Willcock lost, the judge strongly criticised the government for using wartime identity powers in peacetime.

Public opinion shifted rapidly. In 1952 the government abolished the identity card system, citing public hostility and declining administrative value.

This episode established an enduring political norm: identity cards were seen as incompatible with British civil liberties traditions.

4.2 The Modern Origins of the UK Identity Card Debate

Although the UK abolished wartime identity cards in 1952, the modern political controversy around identity infrastructure largely began decades later in the late 1990s and early 2000s. Following growing concerns about immigration control, welfare fraud, and national security, the Labour government under Tony Blair began exploring the possibility of introducing a modern national identity system.

In 2002 the government published a consultation paper proposing a national identity card scheme linked to a centralised population register. The proposal drew political momentum from the aftermath of the terrorist attacks of September 11, 2001, as policymakers increasingly framed identity infrastructure as part of a broader security strategy.

The eventual Identity Cards Act 2006 created a legal framework for biometric identity cards and a National Identity Register intended to hold personal and biometric information for residents of the UK. Supporters argued that the system could strengthen border control, reduce identity fraud, and simplify interactions with public services.

However, the proposal quickly became one of the most controversial digital policy initiatives in modern British politics. Civil liberties organisations, technology experts, and opposition politicians raised concerns about privacy, surveillance, cost, and the risks associated with maintaining large centralised identity databases.

When the coalition government took office in 2010, the programme was rapidly dismantled. The Identity Documents Act 2010 repealed the identity card legislation and ordered the destruction of the National Identity Register.

The collapse of the programme reinforced a powerful political narrative: large-scale identity infrastructure in the UK was both technically risky and politically toxic. This legacy continues to shape contemporary debates around digital identity, age verification, and online authentication systems.

4.3 The Identity Cards Act 2006

The Identity Cards Act 2006 was the legislative centrepiece of the debate that had resurfaced after the September 11 attacks, amid rising concerns about terrorism and immigration control.

The system proposed by the Labour government under Tony Blair was ambitious. It envisioned:

- biometric identity cards

- a centralised national identity register

- integration with passports, immigration systems, and other government databases.

However, the proposal faced sustained criticism from across the political spectrum. Critics argued that the system would:

- expand state surveillance

- create large government databases vulnerable to abuse

- erode long-standing traditions of anonymity in public life.

The programme also encountered significant technical and financial challenges.

When the coalition government took office in 2010, it moved quickly to repeal the legislation. The Identity Documents Act 2010 abolished the identity card programme and destroyed the national identity register.

The episode reinforced the perception that national identity systems were politically toxic in the UK.

4.4 The Limited Success of Digital Identity: the Government Gateway

Before the GOV.UK Verify programme, the UK government had already deployed a large-scale digital authentication system known as Government Gateway. Introduced in the early 2000s, Government Gateway provided login credentials for accessing a range of public services, most notably online tax filing through HM Revenue & Customs.

Millions of individuals and businesses used Government Gateway IDs to interact with government systems, making it one of the earliest widely adopted digital identity mechanisms in the UK. While the system functioned primarily as an authentication service rather than a full identity assurance framework, it demonstrated that citizens were willing to use digital credentials for government services. The later GOV.UK Verify initiative attempted to build on this foundation by introducing stronger identity verification through third-party providers, but it ultimately struggled to achieve the same level of adoption.

In hindsight, Government Gateway illustrated a recurring pattern in the UK: relatively successful authentication systems exist, but attempts to expand them into comprehensive national identity infrastructure tend to encounter political and institutional resistance.

4.5 The Failure of Digital Identity: GOV.UK Verify

Attempts to build digital identity infrastructure also struggled.

The government’s flagship digital identity system, GOV.UK Verify, was launched to allow citizens to access public services online using private-sector identity providers. However, the programme never achieved widespread adoption.

A parliamentary review later concluded that the system was failing to meet its objectives, citing low user uptake and technical limitations.

This failure reinforced scepticism toward government-led identity infrastructure.

4.6 Delegated Authority and the Next Generation of Digital Identity

Recent policy discussions have shifted toward a more decentralised model of digital identity.

The UK government’s digital verification services trust framework introduces the concept of delegated authority, where individuals can authorise trusted digital services to act on their behalf. This model attempts to avoid the political and technical pitfalls of earlier centralised identity schemes.

Recent consultations on digital identity policy suggest the government is again exploring ways to integrate identity systems into public services, including authentication for accessing government services online.

However, the history of repeated identity system failures means that such proposals are likely to remain politically sensitive.

The current UK digital identity programme is led within the Government Digital Service by the Digital Identity team, which has been tasked with developing the UK’s digital identity trust framework and related verification services.

4.7 Security, Requirements, and the Practical Challenges of Identity Infrastructure

One of the most common objections raised during debates about national identity systems is the fear that such systems will inevitably be hacked or compromised. Critics frequently argue that a centralised identity infrastructure would create a single point of failure in which sensitive personal data could be exposed if the system were breached.

However, this argument often overlooks an important factor: the security of a system is not determined solely by its architecture but also by the quality of its design and implementation. Secure systems are the result of careful engineering, rigorous policy design, and disciplined development practices. When identity systems are designed with clear security models, strong operational controls, and well-defined trust frameworks, they can be highly resilient.

For example, the Government Gateway, which has served as the authentication system for UK public services such as HMRC online tax filing since the early 2000s, has operated for many years without major public breaches of its core infrastructure. Systems like this demonstrate that secure digital authentication platforms are achievable when sufficient attention is given to design, governance, and operational security.

A second challenge arises from the political environment surrounding identity systems. Debates about civil liberties and surveillance often expand the scope of proposed systems by introducing increasingly complex requirements intended to satisfy multiple policy concerns simultaneously. These expanding requirements can significantly complicate the design and implementation of identity infrastructure, increasing cost and risk.

A third issue concerns institutional continuity. Large digital identity programmes often rely on the same organisational teams that worked on earlier projects. When previous programmes have struggled or failed, simply extending the same organisational structures into new initiatives may not address underlying problems in governance, design approach, or technical capability.

Finally, there is the tendency for governments to overload identity systems with additional features and services. Instead of implementing a simple and reliable identity credential, programmes are often expanded to include digital wallets, benefits integration, authentication frameworks, and multiple service layers. While these features may appear attractive from a policy perspective, they significantly increase system complexity and the risk of implementation failure.

International examples suggest a different approach. Countries such as Germany and France have implemented relatively straightforward identity systems that focus primarily on providing a reliable identity credential. Additional services can then be layered on top of this infrastructure over time, rather than being embedded into the initial design.

In practice, successful identity infrastructure often depends less on ambitious feature sets and more on disciplined engineering: clearly defined scope, strong security design, and incremental implementation.

The lessons from earlier identity programmes are directly relevant to the current wave of age-verification and digital identity proposals. If identity attributes are increasingly becoming part of the architecture of online systems, then the quality of the underlying identity infrastructure will matter enormously. Poorly designed systems risk creating fragile or intrusive identity mechanisms, while well-designed systems could provide narrowly scoped credentials, such as age verification, without exposing unnecessary personal data. In this sense, the debate about digital identity is not simply about whether identity systems should exist, but about how carefully they are engineered and how narrowly their scope is defined.

Government digital infrastructure has also faced criticism from oversight bodies. Reports from the UK National Audit Office have highlighted systemic cybersecurity risks and weaknesses in how data is shared across government systems, illustrating the institutional challenges involved in building large-scale digital infrastructure.

4.8 The European Contrast: France and Germany

The UK’s reluctance to adopt identity cards stands in contrast to many European countries.

In France, national identity cards have existed for decades and are widely accepted administrative tools. French citizens routinely use them for identification, travel within the European Union, and access to public services.

Similarly, Germany operates a national identity card system that includes digital identity functionality. The German electronic identity card allows citizens to authenticate themselves online for government and commercial services.

In these countries identity cards are largely viewed as administrative infrastructure rather than instruments of surveillance.

4.8.1 UK–France–Germany Identity Infrastructure Comparison Table

| Country | National ID Card | Population Registry | Digital Identity Integration | Public Perception |
|---|---|---|---|---|
| United Kingdom | No permanent national ID card (wartime cards abolished in 1952; 2006 ID scheme repealed in 2010) | No comprehensive population registry | Fragmented systems (Government Gateway, GOV.UK Verify, One Login) | Politically contentious; identity systems often framed as civil liberties issues |
| France | Long-standing national identity card (Carte Nationale d’Identité) | Centralised administrative records | Increasing integration with digital government services | Widely accepted as normal administrative infrastructure |
| Germany | Mandatory national identity card (Personalausweis) | Comprehensive municipal population registry (Melderegister) | Built-in electronic identity functionality for online authentication | Accepted as routine state infrastructure |
| Estonia (reference example) | Universal national digital ID | Fully integrated national registry | Extensive digital identity ecosystem for public and private services | Seen as core digital infrastructure |

Key point for readers:

- While many European states treat identity credentials as basic administrative infrastructure, the UK has historically treated them as politically sensitive civil liberties questions.

4.9 Why the UK Debate Is Different

Several factors explain why identity systems are more controversial in the UK.

First, Britain lacks a tradition of national population registries common in many continental European states.

Second, the UK’s constitutional culture places strong emphasis on informal liberties and resistance to compulsory documentation.

Third, previous attempts to introduce identity systems have repeatedly collapsed, creating institutional memory and scepticism.

The result is a political environment in which identity infrastructure proposals are often interpreted through the lens of civil liberties debates rather than administrative efficiency.

4.10 From ID Cards to Digital Identity

Despite this historical resistance, identity systems are gradually re-emerging in new forms.

Modern digital identity proposals differ from earlier identity card schemes in several important ways:

- they often rely on distributed credentials rather than centralised databases

- they integrate with smartphones and online authentication systems

- they can support specific attributes, such as age verification, without revealing full identity.
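As a rough illustration of the last point, the sketch below issues a credential that carries a single attribute (`over_18`) and nothing else. It is a minimal sketch under stated assumptions, not a description of any deployed scheme: the function names and the `ISSUER_KEY` value are hypothetical, and a shared-secret HMAC stands in for the asymmetric signatures that a real issuer would more plausibly use.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret between issuer and verifier (assumption:
# real schemes would use public-key signatures instead).
ISSUER_KEY = b"demo-key-not-for-production"


def issue_age_token(over_18: bool, key: bytes = ISSUER_KEY) -> dict:
    """Issue a token whose only claim is the age attribute."""
    claim = json.dumps({"over_18": over_18}, sort_keys=True)
    tag = hmac.new(key, claim.encode(), hashlib.sha256).hexdigest()
    return {"claim": claim, "tag": tag}


def verify_age_token(token: dict, key: bytes = ISSUER_KEY) -> bool:
    """Accept the token only if it is authentic and asserts over_18."""
    expected = hmac.new(key, token["claim"].encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, token["tag"]):
        return False  # claim was altered or not issued with this key
    return json.loads(token["claim"]).get("over_18") is True
```

The verifying service learns one bit about the user and can check that the issuer vouched for it. The token carries no name, date of birth, or document number, which is the design property that distinguishes this model from a centralised identity database.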

These developments suggest that identity infrastructure may emerge incrementally rather than through a single national identity card programme.

In this sense, contemporary age verification systems may represent a partial reintroduction of identity mechanisms into the architecture of the internet.

4.11 And on to Age Verification

The long and contentious history of identity systems in the United Kingdom helps explain why contemporary debates about digital identity and age verification often become politically charged. Direct attempts to introduce comprehensive identity infrastructure (such as national ID cards or centralised digital identity systems) have repeatedly failed.

Yet identity attributes are now quietly reappearing through narrower regulatory mechanisms such as age verification and online safety laws. Rather than being introduced as a single national identity programme, identity credentials are emerging incrementally as functional components of digital systems. In this sense, the current wave of age verification may represent an indirect route toward forms of identity infrastructure that earlier political debates made difficult to introduce explicitly.

4.12 The Reality of Identity in the UK: De Facto Systems and Structural Gaps

Despite the UK’s historical resistance to national identity cards, identity infrastructure already exists in partial and fragmented forms. Several government identifiers function as de facto identity credentials, even if they were never designed as such.

One of the most prominent examples is the National Insurance number (NINO). Originally introduced to administer the social security system, the National Insurance number now serves as a widely used identifier across multiple areas of government interaction, including taxation, employment, and benefits administration. In practice, it functions as a quasi-identity number for many citizens.

However, the National Insurance system was not designed to operate as a universal identity credential. Not everyone possesses a permanent National Insurance number, temporary numbers exist for certain cases, and the system lacks the broader identity assurance features required for a modern identity infrastructure. These limitations illustrate the challenges of relying on systems that evolved for administrative purposes rather than being designed as identity platforms.

One possible alternative approach would be to build upon such existing identifiers rather than creating entirely new systems. Expanding an existing identifier into a properly designed digital identity credential could provide a simpler and more incremental path toward identity infrastructure than introducing a completely new national identity system.

At the same time, modern digital identity debates increasingly emphasise the importance of user control over identity data. Identity theorists such as Kim Cameron have articulated principles for identity systems that prioritise user autonomy and minimal disclosure. Cameron’s “Laws of Identity,” along with later developments such as Tim Berners-Lee’s work on Solid and personal data pods, propose identity architectures in which individuals retain control over their own credentials and personal data.

In such models, identity infrastructure does not mean centralised government ownership of personal information. Instead, individuals hold credentials that can be selectively presented to services when required. This approach aims to balance the administrative benefits of identity systems with stronger protections for privacy and personal autonomy.
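Selective presentation of this kind can be sketched with salted hash commitments, loosely in the spirit of salted-hash selective disclosure schemes. Everything here is illustrative: the function names, the attribute names, and `ISSUER_KEY` are hypothetical, and an HMAC again stands in for a real issuer signature.

```python
import hashlib
import hmac
import json
import secrets

ISSUER_KEY = b"demo-key-not-for-production"  # hypothetical issuer secret


def _digest(salt: str, name: str, value) -> str:
    # Commitment to one attribute; the random salt prevents guessing values.
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()


def issue(attributes: dict, key: bytes = ISSUER_KEY):
    """Return (credential, disclosures). The holder keeps the disclosures."""
    disclosures = {n: (secrets.token_hex(16), v) for n, v in attributes.items()}
    digests = sorted(_digest(s, n, v) for n, (s, v) in disclosures.items())
    signature = hmac.new(key, json.dumps(digests).encode(), hashlib.sha256).hexdigest()
    return {"digests": digests, "signature": signature}, disclosures


def present(credential: dict, disclosures: dict, reveal: list) -> dict:
    """Holder reveals only the chosen attributes alongside the credential."""
    return {"credential": credential,
            "revealed": {n: disclosures[n] for n in reveal}}


def verify(presentation: dict, key: bytes = ISSUER_KEY):
    """Return the verified revealed attributes, or None if anything fails."""
    cred = presentation["credential"]
    expected = hmac.new(key, json.dumps(cred["digests"]).encode(),
                        hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, cred["signature"]):
        return None
    verified = {}
    for name, (salt, value) in presentation["revealed"].items():
        if _digest(salt, name, value) not in cred["digests"]:
            return None  # revealed value does not match what was issued
        verified[name] = value
    return verified
```

A holder issued a credential containing a name, a date of birth, and an `over_18` flag could call `present(credential, disclosures, ["over_18"])` and the service would see only that flag plus opaque digests for the rest. One limitation of this simple sketch is that the digest list is identical on every presentation, so services could correlate the same credential across contexts; schemes that avoid this need additional machinery.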

Operational experience also highlights another dimension of the identity debate. During work on border control systems in the UK, conversations with enforcement teams and individuals encountered during border operations revealed a recurring observation: the relative fragmentation of identity management across UK institutions can make it easier for individuals to navigate between different systems without consistent identity verification.

In contrast, countries with more integrated identity infrastructures, such as France and Germany, often link government services more closely to verified identity credentials. While this does not eliminate irregular migration or identity fraud, it changes the structure of the administrative environment in which individuals operate.

These observations illustrate a broader point: the debate over identity systems in the UK is not simply about whether identity infrastructure should exist. In practice, identity mechanisms already exist in partial forms across different systems. The real policy question is how those mechanisms should be designed, governed, and integrated in ways that balance administrative effectiveness, security, and individual autonomy.

In effect, the National Insurance number already functions as a persistent identity attribute within parts of the UK administrative system: an early example of the kind of identity-linked infrastructure that is now beginning to appear more broadly across digital platforms.

Oversight reports have repeatedly highlighted the fragmentation of data systems across UK government departments. A National Audit Office review of cross-government data use noted significant challenges in linking and sharing identity information between agencies, reflecting the absence of a unified identity infrastructure.

And so, in this sense, the age-verification wave may represent not a departure from the UK’s long struggle over identity infrastructure, but its latest and more indirect iteration. Today, identity attributes are increasingly embedded invisibly within the infrastructure of digital platforms.

5. What People Think Is Happening

Whenever a technological system begins to change its underlying architecture, public understanding tends to organise itself around narratives. These narratives are rarely entirely wrong, but they are often incomplete. They simplify a complex transition into a story that can be easily communicated, debated, and politically mobilised.

The emerging age-verification regime is no exception. Across policy debates, media coverage, and online discourse, two dominant interpretations have crystallised. Each reflects a particular set of concerns, and each draws on real developments within the legislative landscape described in the previous section. Yet neither fully captures the structural transformation that is underway.

To understand what is actually happening, it is useful to examine these two narratives in turn.

5.1 Narrative A: Child Protection

The first narrative is the official one. Governments introducing age-verification laws almost invariably frame them as necessary interventions to protect children from a digital environment that has become increasingly difficult to regulate through traditional means.

Over the past decade, concerns about youth wellbeing online have intensified. Policymakers point to a range of issues that appear to correlate with the expansion of social media platforms and algorithmically curated digital spaces. Within this framing, age verification is not a mechanism of control but a tool for safeguarding vulnerable users.

The arguments generally revolve around several related claims:

- Protecting children from harmful content. Legislators frequently cite the ease with which minors can encounter pornography, self-harm material, or other forms of disturbing content online.

- Reducing social media addiction. A growing body of research suggests that algorithmic feeds may encourage compulsive engagement patterns, particularly among adolescents.

- Preventing grooming and exploitation. Law enforcement agencies have raised concerns about adults using online platforms to target minors.

- Addressing youth mental health challenges. Rising levels of anxiety, depression, and self-harm among teenagers are often linked, sometimes controversially, to social media exposure.

Within this narrative, the regulatory logic appears straightforward. If platforms are required to protect children from certain types of content or interaction, they must first be able to identify which users are children. Age verification, therefore, becomes a technical prerequisite for enforcing child-safety rules.

From this perspective, the emerging regulatory architecture is not fundamentally about controlling the internet but about adapting existing child protection principles to a digital environment where traditional safeguards have failed.

To many policymakers, the absence of reliable age verification online appears anomalous. In the physical world, access to restricted goods or environments (alcohol, gambling venues, adult entertainment) already depends on some form of age verification. Extending similar safeguards to the internet seems, in this view, both logical and overdue.

Yet the simplicity of this reasoning also conceals important complexities.

5.2 Narrative B: The “Censorship Industrial Complex”

Opposition to age-verification laws has produced a very different narrative, particularly among civil liberties advocates, technologists, and segments of the online policy community.

Within this framework, age verification is not merely a child protection mechanism but the leading edge of a broader system of digital control. Critics argue that once governments or platforms possess the technical ability to verify user identity attributes, that capability can be extended to regulate speech, participation, and access to information.

This interpretation frequently invokes what some commentators describe as a “censorship industrial complex”: a network of governmental institutions, regulatory bodies, and platform governance mechanisms capable of shaping online discourse at scale.

The concerns associated with this narrative typically fall into three categories:

- The erosion of anonymity. Mandatory age verification could require users to provide personal information, identity documents, biometric data, or financial credentials before accessing online services. Critics argue that such systems fundamentally undermine the anonymous or pseudonymous participation that has historically characterised the internet.

- Expanded mechanisms of speech control. Once identity attributes are integrated into platform infrastructure, regulators may gain the ability to enforce differentiated rules for different categories of users. Age verification could thus become a gateway to broader systems of speech governance.

- Concentration of power. Implementing large-scale verification systems tends to favour major technology companies capable of absorbing compliance costs, potentially reinforcing the dominance of existing platforms.

For critics operating within this framework, the trajectory appears clear. Age verification begins with child safety, but it establishes a technical infrastructure that could support far more expansive forms of digital governance.

The argument is not that every policymaker intends such an outcome. Rather, it is that the underlying architecture of identity verification creates possibilities that extend well beyond the original regulatory rationale.

5.3 Narrative C: Advertising Markets and Bot Detection

A third narrative emerging in online discussions attributes the push toward identity verification to pressures within digital advertising markets.

Advertising systems depend heavily on measuring human attention. As automated content generation and bot traffic increase, distinguishing genuine human engagement from automated activity becomes more difficult. Advertisers increasingly demand assurance that the audiences they pay to reach represent real people rather than automated systems or engagement farms.

From this perspective, stronger identity signals could help platforms demonstrate the authenticity of their user base. Age or identity attributes would function as signals of human users, improving confidence in advertising metrics.

While this explanation does not appear to be the primary driver of recent legislation, it reflects a broader economic pressure within digital platforms: the need to maintain trust in the authenticity of online audiences.

5.4 Narrative D: Platforms Shifting Verification to Infrastructure

A fourth narrative focuses on the strategic incentives of large platforms.

Rather than verifying user identity directly within their own services, platforms increasingly advocate for age verification to occur at deeper layers of the digital ecosystem, such as operating systems or device-level services.

Under this model, identity attributes are verified once at the device or account level and then made available to applications through standardised interfaces. Platforms can then apply age-based rules without storing sensitive identity data themselves.

This approach reduces liability and operational complexity for individual platforms while embedding identity signals into shared digital infrastructure.

As a result, policy debates about age verification increasingly intersect with operating system providers, app stores, and device manufacturers rather than only with individual online services.
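The device-level model described in this section can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor's actual API: `AgeBracket`, `DeviceAgeService`, and `feed_settings_for` are hypothetical names, and the bracket boundaries (13 and 18) are chosen only for illustration. The point of the sketch is that the application receives a coarse bracket and never sees the birthdate or document used to verify it.

```python
from datetime import date
from enum import Enum


class AgeBracket(Enum):
    # Illustrative brackets; real systems may define different boundaries.
    UNDER_13 = "under_13"
    TEEN = "13_17"
    ADULT = "18_plus"


class DeviceAgeService:
    """Hypothetical OS-level service: verifies age once, exposes only a bracket."""

    def __init__(self, verified_birthdate: date):
        # The birthdate stays private to the device-level service.
        self._birthdate = verified_birthdate

    def age_bracket(self, today: date) -> AgeBracket:
        # Age in whole years, subtracting one if this year's birthday has not yet passed.
        b = self._birthdate
        age = today.year - b.year - ((today.month, today.day) < (b.month, b.day))
        if age < 13:
            return AgeBracket.UNDER_13
        if age < 18:
            return AgeBracket.TEEN
        return AgeBracket.ADULT


def feed_settings_for(bracket: AgeBracket) -> dict:
    """Hypothetical app-side rule: configure features from the bracket alone."""
    return {
        "direct_messages_from_strangers": bracket is AgeBracket.ADULT,
        "personalised_feed": bracket is not AgeBracket.UNDER_13,
    }
```

In this arrangement the application never handles identity documents, which is the liability reduction the narrative describes. The trade-off is that every application on the device now depends on the same shared classification service.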

5.5 The Limits of These Narratives

Each of these interpretations captures a genuine dimension of the current policy debate. Governments are indeed motivated by concerns about child safety and youth wellbeing. At the same time, critics are correct that large-scale identity infrastructure introduces new capacities for monitoring and controlling digital participation.

Yet taken individually, the two dominant narratives obscure the deeper transformation now underway.

The child protection narrative focuses primarily on the policy justification for age verification. The censorship narrative focuses on its potential consequences. What neither fully addresses is the structural shift in how digital environments themselves are being organised.

At its core, the emerging system is less about removing particular pieces of content than about classifying users before interaction occurs. The architecture being built does not merely regulate information; it determines which categories of users may access which types of digital spaces in the first place.

In other words, the internet is gradually moving from a model in which:

- users enter a largely open network and content is moderated afterward

toward one in which:

- user attributes are determined first, and the platform environment is configured accordingly.

This shift is subtle, but it is significant. It marks a transition from content-based governance toward identity-mediated governance.

Understanding that transition requires looking not only at the policies themselves but also at the technological systems being constructed to implement them.

Although these narratives differ in their explanations, they converge on a similar structural outcome.

Whether driven by child safety concerns, regulatory pressure, advertising economics, or platform strategy, many proposals ultimately require systems capable of verifying and transmitting identity attributes across digital services.

This convergence suggests that the most significant transformation may not lie in the motivations behind age verification but in the architectural changes required to implement it.

5.6 Emerging Policy Debate

Public discussion surrounding age-verification laws has intensified as the first wave of policies begins to move from legislation to implementation.

Government regulators and child-safety advocates increasingly frame age verification as a necessary adaptation to a digital environment in which children encounter content and interactions that earlier regulatory frameworks were not designed to address. Several jurisdictions have begun enforcing social media age restrictions or platform safety obligations, and technology companies have responded by developing new technical tools such as operating-system age APIs, platform-level verification systems, and third-party identity services.

At the same time, privacy advocates, digital-rights organisations, and some researchers have raised concerns about the broader implications of these systems. Critics warn that large-scale age verification could introduce new forms of identity infrastructure into the architecture of the internet, potentially enabling expanded monitoring of online participation and reducing the range of spaces in which users can interact anonymously.

These debates often focus on the immediate effectiveness or risks of particular age-verification systems. Yet the deeper significance of the current moment may lie less in any single implementation and more in the structural trajectory that is beginning to emerge. Across different jurisdictions and technological ecosystems, similar patterns are appearing: regulatory pressure creates incentives to classify users, platforms seek scalable verification mechanisms, and identity attributes become embedded within technical infrastructure.

Understanding the long-term consequences of these developments therefore requires looking beyond the immediate policy controversy toward the architectural transformations they may produce.

5.7 Moderation vs. Environment Design

Seen from this perspective, the most important change is conceptual rather than technical.

The earlier internet treated harmful content primarily as a problem of moderation. The emerging model treats it as a problem of environment design.

Moderation removes or labels specific pieces of information after they appear. Environment design determines what information becomes visible to particular users in the first place.

The difference is subtle but profound. Moderation operates at the level of individual posts. Environment design operates at the level of the system itself.

Age verification, in this sense, is not simply a compliance mechanism. It is the key that allows platforms and regulators to begin reorganising the structure of the digital environment around categories of users.

And once that key exists, the architecture of the internet begins to change.

5.8 A Historical Precedent: Governance Migration in Telecommunications

The migration of governance toward deeper layers of digital infrastructure is not unprecedented.

During the development of modern telecommunications networks in the late twentieth century, regulators initially attempted to control specific services and applications operating on top of telephone networks. Over time, however, it became clear that regulating individual services was impractical as the number of services expanded.

Regulatory frameworks gradually shifted toward the infrastructure layer of telecommunications networks. Obligations were imposed on network operators to support capabilities such as lawful interception, emergency call routing, and number portability. By regulating the infrastructure through which services operated, policymakers could influence the entire communications ecosystem rather than attempting to regulate each service individually.

The current evolution of age verification policy may represent a similar shift. Rather than imposing requirements on individual websites or applications, policymakers are increasingly exploring mechanisms that operate at deeper layers of the digital stack, including operating systems and device-level services.

5.9 Motivations and Structural Outcomes

Public debates about age verification often focus on identifying the primary motivation behind these policies. Some observers emphasise child safety concerns, while others highlight regulatory pressure, platform liability, advertising economics, or strategic positioning by large technology companies.

These explanations may all capture part of the broader landscape.

However, the motivations behind policy proposals are often difficult to determine with certainty and may vary across jurisdictions and actors. Policymakers, companies, advocacy groups, and regulators frequently pursue different objectives simultaneously.

For this reason, the present analysis focuses less on determining the precise motivations behind age verification initiatives and more on examining the structural consequences of the mechanisms being proposed.

Regardless of the motivations driving these policies, implementing age verification at scale requires systems capable of reliably classifying users and communicating those classifications across digital services.

This requirement introduces new forms of identity infrastructure into the technical architecture of the internet.

The central question, therefore, is not only why these policies are being proposed, but also how the mechanisms used to implement them may reshape the structure of digital environments.

6. What Is Actually Being Built

The previous sections described two things: the laws that are emerging across jurisdictions, and the public narratives through which those laws are interpreted. Both are important, but neither fully explains the deeper transformation underway. To understand the significance of the current policy wave, it is necessary to step back from the legislation itself and examine the architecture of the internet systems these laws are gradually forcing into existence.

At its most fundamental level, the shift can be summarised in a single transition.

For most of the modern internet, governance occurred after information was published. Content appeared first; moderation happened later.

In simplified form, the old model looked something like this:

content → moderation

A user could create an account, often pseudonymously, and publish text, images, or video. Platforms might subsequently review the material, remove it, label it, or demote its visibility according to their policies. But the governing principle was reactive. The system intervened after content entered circulation.

The emerging architecture reverses this sequence.

Instead of waiting for content to appear and then deciding how to respond, the new model begins by determining who the user is, or more precisely, what category of user they belong to. Only once that classification has occurred does the system decide what content, features, or algorithmic pathways will be available.

The resulting logic looks like this:

identity → rules → algorithmic exposure

The distinction may appear subtle, but its consequences are significant. Under this architecture, the user’s attributes (age, identity status, or other credentials) shape the digital environment before interaction even begins.
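The contrast between the two sequences can be made concrete with a short Python sketch. It is illustrative only: the `Post` type, the `"adult"` and `"minor"` categories, and the choice to treat unverified users under the most restrictive rule are all assumptions introduced here, not features of any specific platform or law.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    text: str
    adult_only: bool = False
    flagged: bool = False  # set by moderators after publication


def moderated_view(posts: list[Post]) -> list[Post]:
    """Old model (content -> moderation): every user sees whatever has not been removed."""
    return [p for p in posts if not p.flagged]


def identity_mediated_view(posts: list[Post], user_category: str) -> list[Post]:
    """New model (identity -> rules -> exposure): the user's category selects
    the rules first, and the rules decide what can be shown at all."""
    rules = {
        "adult": lambda p: True,
        "minor": lambda p: not p.adult_only,
    }
    # Assumption: unknown or unverified categories get the most restrictive rule.
    allowed = rules.get(user_category, rules["minor"])
    # Post-publication moderation still applies on top of the category rule.
    return [p for p in posts if allowed(p) and not p.flagged]
```

In the first function the user is not an input at all. In the second, nothing can be returned until the user has been classified, which is the structural change the section describes.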